The “new” Google Search Console rolled out to webmasters in 2018. When this occurred, you may have received a message called “Introducing the new Search Console (beta)” or read about it on Google’s blog.

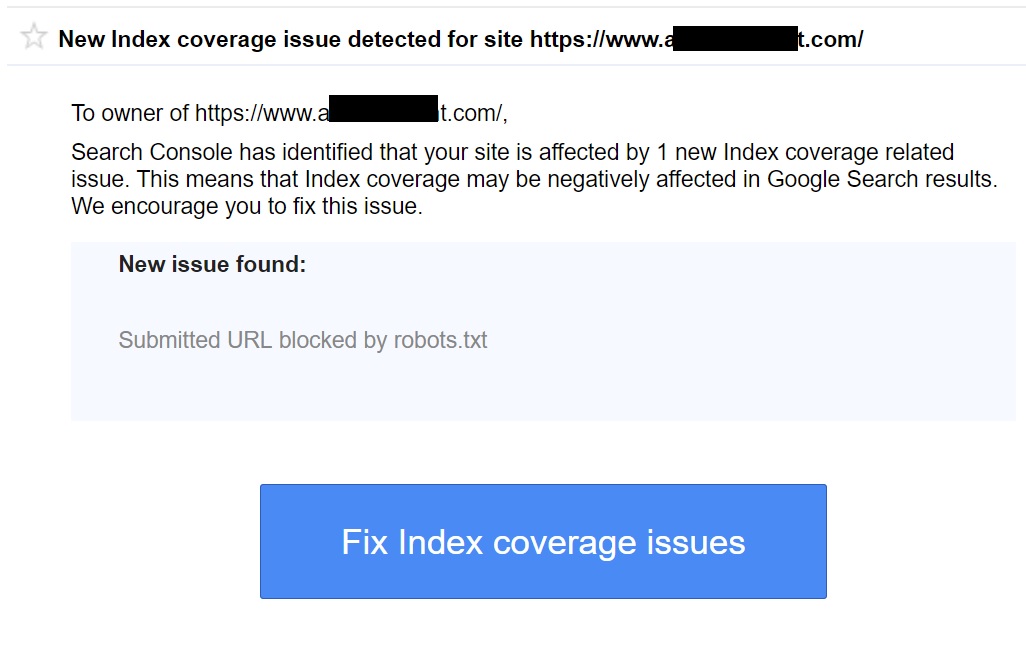

Since then, a scary warning has gone out to thousands of webmasters. That warning, or error, reads “New Index coverage issue detected for site www.YourDomain.com” and says a new issue was found, such as “Submitted URL blocked by robots.txt”.

It looks like this:

What does “New Index coverage issue detected for site” mean?

This can have several causes, but in most cases your sitemap lists a file or folder, such as “/images/”, that is blocked by your robots.txt file. The robots.txt file tells search engines not to crawl certain parts of a website. In many cases a robots.txt file will block all sorts of things, such as an admin login screen. Wannabe hackers are always scanning the web for these pages, and keeping them out of search results prevents unnecessary hack attempts.

It is, however, always wise to click on “Fix index coverage issues” to see which resources have been blocked. Every time I have seen this message, what was blocked was a single irrelevant file or folder, and I ignored the message completely. One error like this is not going to cause your rankings to tank in search or harm your web presence in any way, shape or form.
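If you would rather check this yourself before opening Search Console, the mismatch described above (a URL listed in the sitemap that robots.txt disallows) can be sketched in a few lines of Python. This is only a rough illustration using the standard library: the function names `sitemap_urls` and `blocked_urls`, the sample robots.txt, and the `www.example.com` URLs are all made up for the example, and Python's `urllib.robotparser` does not match rules exactly the way Googlebot does (for instance, it has no wildcard support), so treat the result as a hint rather than a verdict.

```python
import xml.etree.ElementTree as ET
from urllib.robotparser import RobotFileParser

# Namespace used by standard sitemap.xml files.
SITEMAP_NS = "{http://www.sitemaps.org/schemas/sitemap/0.9}"


def sitemap_urls(sitemap_xml):
    """Return every <loc> URL found in a sitemap XML string."""
    root = ET.fromstring(sitemap_xml)
    return [loc.text.strip() for loc in root.iter(SITEMAP_NS + "loc") if loc.text]


def blocked_urls(robots_txt, urls, user_agent="Googlebot"):
    """Return the URLs that the given robots.txt disallows for user_agent."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [url for url in urls if not parser.can_fetch(user_agent, url)]


# Hypothetical sample data, for illustration only.
SAMPLE_ROBOTS = """\
User-agent: *
Disallow: /images/
Disallow: /wp-admin/
"""

SAMPLE_SITEMAP = """\
<urlset xmlns="http://www.sitemaps.org/schemas/sitemap/0.9">
  <url><loc>https://www.example.com/</loc></url>
  <url><loc>https://www.example.com/images/</loc></url>
  <url><loc>https://www.example.com/about/</loc></url>
</urlset>
"""

if __name__ == "__main__":
    for url in blocked_urls(SAMPLE_ROBOTS, sitemap_urls(SAMPLE_SITEMAP)):
        print("In sitemap but blocked by robots.txt:", url)
```

To try it on a real site, you would paste in the contents of your own robots.txt and sitemap.xml in place of the sample strings. Anything it lists is a URL you are asking Google to index while also telling it not to crawl; either drop it from the sitemap or remove the Disallow rule, depending on which one you actually meant.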


I am facing the same “New Index coverage issue detected for site” issue on my site, but I don’t know how to fix it. Any help?

I am facing the same issue; some of the URLs are blocked by robots.txt. How can I overcome this situation? Please let me know.

In your case, you have some URLs blocked by robots.txt. There are just a couple of them, and it looks intentional. This is likely what Google is referring to.

This is the most beneficial blog and updated me with new facts. I really feel happy by reading your blogs.

Hi there, I just got this and the owner wants me to look into it, but I am having trouble… How do I fix this? Please advise. Thank you.

“Coverage issues detected on https://modernstudio.com/“

Please feel free to email me about this if you’re still having issues.

If you do not get it fixed please feel free to email me.

thanks for sharing very knowledgeable information.

I have already managed the New Index coverage issue.

Thanks for sharing this type of article.

Thanks for sharing such a great blog… I am impressed with you taking time to post a nice info.

Hi there! I’m running into the same issue — I received the “New Index coverage issue detected for site” alert in Google Search Console and noticed that some of my URLs are blocked by robots.txt. I’m not sure how to fix it. Could someone explain how to identify which URLs are affected and how to resolve this blockage? Thanks in advance!

Thanks for sharing very knowledgeable information. Post more content like this.